Most products don’t have the luxury of retaining users beyond the first session solely on their solid reputations, and in order to do so, we need to optimize for the best user experience possible.

Have you recently launched a cool new feature that you spent a lot of time on? What data do you use to determine its impact? Take a look at this post; it may be just for you!

Ted Lasso has some real-life lessons we can all take in. How is this possible? Take a look!

Why is it important to remove underperforming features to improve the product’s key metrics? Find out here.

We wanted to see if there was a way we could sync our Kubernetes NetworkPolicies dynamically with tools we already use, like Consul and Calico.

Read this article to learn more about what conversions are, how Taboola handles billions of daily events at scale, and how it all presents meaningful data to customers.

Kafka is an open-source distributed event streaming platform and something went wrong while working with it. Let’s see how it was investigated and resolved.

Find out the secrets to how Taboola deploys and manages the thousands of servers that bring you recommendations every day.

Optimize Data Center Health: Taboola employs an LSTM autoencoder for precise anomaly detection, enhancing system performance.

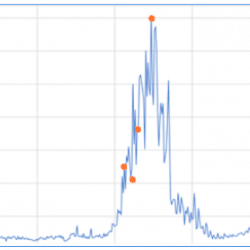

At Taboola, we work daily on improving our deep-learning-based content-recommendation model. We use it to suggest personalized news articles and ads to hundreds of millions of users a day, so naturally we must stick to state-of-the-art deep learning modeling methods. But our job doesn’t end there: analyzing our results is a must too, and for that we sometimes return to our data science roots and apply some very basic techniques.

Let’s lay out one such problem. We are investigating a deep model that behaves rather strangely: it beats our default model for what looks like a random group of advertisers, and loses to it for another group. This behavior is stable from day to day, so it looks like some inherent advertiser qualities (what we’ll call campaign features) might be to blame. You can see typical model behavior for 4 campaigns below. So we hypothesize that […]